Executive Summary

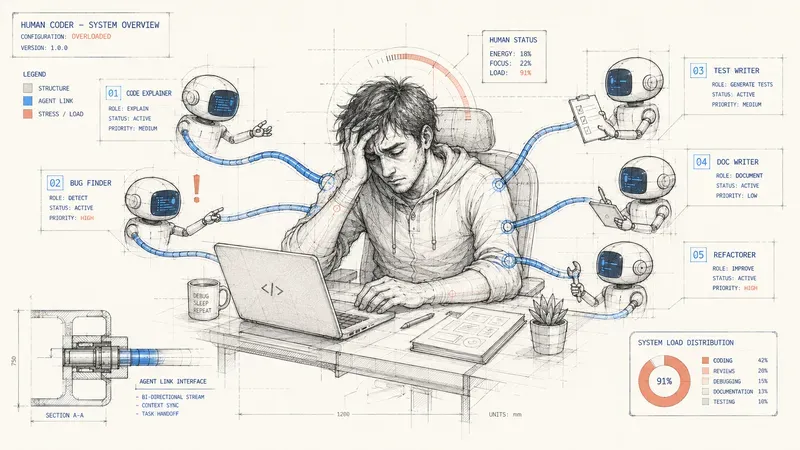

Observation: AI coding workflows do not all create fatigue in the same way. Vibe coding wears attention down continuously, autonomous agents move the effort to review, and plan mode concentrates human judgment earlier.

Thesis: The central problem is neither token cost nor the raw quality of the tool. It is the distribution of human attention across planning, generation, implementation, and review.

Critical point: When attention arrives too late or stays diffuse for too long, developers rubber-stamp, miss weak signals, or eventually lose the feeling that they are building anything.

Implication: A good AI workflow does not try to remove developer judgment. It places it at the right moment, on the decisions that genuinely deserve high attention.

Pure vibe coders are starting to talk about it openly. Not token cost, not technical debt. Fatigue. After six hours of prompt-result-re-prompt, the brain goes empty. Weak signals slip by. Vulnerabilities accumulate without anyone seeing them.

On the other side, developers who are asked to “just review” the code of an autonomous agent hate their daily work. Reading 500 lines they did not write, with no context on the decisions that were made, is one of the most thankless tasks in the job. So they rubber-stamp. And regressions get through.

The shared problem is neither cost nor tooling. It is the distribution of attention.

Three modes, three attention patterns

“Coding with AI” now covers three distinct approaches. They differ in cost and in reliability, but above all they do not impose the same cognitive effort on the developer.

Vibe coding means describing what you want in natural language, accepting generated code because the result looks right rather than because you reviewed it line by line, and iterating through successive attempts. Fast, intuitive, and cognitively exhausting over time.

Plan mode means asking the agent to first produce a structured plan (steps, files involved, risks) before executing anything. You validate the plan, then the agent implements. Slower, but attention is concentrated at the right moment.

Autonomous agent mode means the agent plans, implements, tests, and fixes in a loop, with no human intervention at each step. You give the objective, you get the result back. Zero effort during execution, but all the effort lands in review.

| | Vibe coding | Autonomous agent | Plan mode |
|---|---|---|---|
| During generation | Diffuse and permanent | Zero (total delegation) | High but short (plan validation) |
| During implementation | Medium: “does this work?” | Zero | Low: passive monitoring |
| At review | Low (no formal review) | Cognitive wall (large diff without context) | Targeted (deviations from the plan, not all the code) |
| Main risk | Fatigue -> real problems slip through | Rubber-stamping -> cosmetic review | Depends on the quality of the initial plan |
| Total cognitive load | High and continuous | Low, then a brutal spike | Distributed and targeted |

Why vibe coding is exhausting

Cognitive psychology has a name for what happens here, the vigilance decrement: the human ability to detect weak signals in a continuous stream collapses over time, typically within 20 to 30 minutes. Recent work goes further: perfect vigilance is theoretically impossible. Prolonged vibe coding puts the developer in exactly that situation: a continuous stream where correct code and dangerous code look alike.

And when fatigue sets in, there is a stage many developers will recognize without wanting to say it out loud: the moment attention shuts down completely. The prompt-result-re-prompt cycle runs on variable reward, the same mechanism as slot machines. The unpredictability of the result maintains engagement while gradually reducing critical thinking. After a few hours, the developer is no longer really thinking. They rerun, accept, rerun. That is the point where vibe coding stops being a tool and becomes autopilot. And that is precisely where real errors settle in silently.

Vibe coders who talk about burnout are not fragile. They are running into biological constraints that discipline alone does not compensate for.

Why autonomous agent review is a trap

The effort has not disappeared with the autonomous agent. It has moved to the end.

The agent delivers a 300 to 800 line diff. The developer has no context on the micro-decisions made along the way. Cognitive psychology calls this extraneous load: complexity does not come from the task itself, but from the way information is presented. A CHI 2026 study measures the phenomenon: stress and fatigue increase with repeated use, even when productivity improves. The predictable result: either the developer spends two hours reverse-engineering the agent’s decisions, or they rubber-stamp, and regressions reach production.

There is a deeper problem too: when developers spend their time reviewing code generated by agents, they stop building things. We turn craftspeople into full-time quality controllers for code they did not choose to write. The pleasure of building disappears, motivation collapses, and the best people eventually leave.

Enhanced plan mode: distributing attention better, not removing it

Classic plan mode is already progress: it separates thinking from implementation. We can go further. But let us be clear: no workflow removes the problem. It only makes it more manageable.

This is the workflow I use day to day. It distributes attention across five phases, and it has its own limits, which I mention too.

Phase 1. Plan validation (high attention, no time limit). This is the most important phase of the whole workflow. The agent produces a structured plan: files involved, steps, identified risks. But validating does not mean reading and approving. It means iterating: asking questions, challenging choices, asking for alternatives, checking that edge cases are covered. Move on only when you are convinced that the plan covers the real scope, not just the scope the agent understood. Everything you let pass here, you will pay for in review. Limit: the quality of the plan depends on the quality of this exchange. A vague brief followed by a quick approval gives you a vague plan, and you only notice it at review time.

Phase 2. Implementation by the agent (low attention). The agent executes the plan. A task artifact is created and updated during implementation; it traces decisions and deviations from the plan. Limit: the artifact does not capture everything. The agent can still drift, and some drift is silent.

Phase 3. Functional review through the artifact (medium, targeted attention). The artifact gives you a synthetic view of what was done versus what was planned. You check deviations instead of rereading the whole diff. Limit: you still spend more time than expected catching deviations. It is better than a raw diff, but it is not free.

Phase 4. Final human review (high, targeted attention). You validate the critical points: security, data handling, architectural consistency. The plan and the artifact have filtered part of the noise. Your review covers less code, not zero code.

Phase 5. Automatic review agent on the PR (safety net). Before the merge, a classic review agent checks the PR: conventions, known vulnerabilities, suspicious dependencies, test coverage. Whatever mode was used upstream, this step is not negotiable. The cost is negligible, the benefit systematic.

The result is not a perfect workflow. It is a workflow where high attention is concentrated on two moments (plan and critical review) instead of being diluted across the whole session. That alternation is what makes the work sustainable over time. Not the illusion that the agent gets everything right the first time.
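To make Phases 2 and 3 more concrete, here is a minimal sketch of what a task artifact and a deviation check could look like. Everything in it is an assumption made for illustration: the `PlanStep` and `ArtifactEntry` structures, their field names, and the `find_deviations` helper are hypothetical and not taken from any specific tool. It only compares planned files with reported files, so it does not catch the silent drift mentioned in Phase 2.

```python
from dataclasses import dataclass


@dataclass
class PlanStep:
    """One step of the plan validated in Phase 1 (hypothetical structure)."""
    step_id: str
    description: str
    files: list[str]


@dataclass
class ArtifactEntry:
    """What the agent reports for one step during Phase 2 (hypothetical structure)."""
    step_id: str
    files_touched: list[str]
    note: str = ""


def find_deviations(plan: list[PlanStep], artifact: list[ArtifactEntry]) -> list[str]:
    """List the gaps between plan and artifact to check in Phase 3."""
    planned = {step.step_id: step for step in plan}
    reported = {entry.step_id for entry in artifact}
    deviations = []
    for entry in artifact:
        step = planned.get(entry.step_id)
        if step is None:
            # The agent did something the plan never mentioned.
            deviations.append(f"unplanned step: {entry.step_id}")
            continue
        extra = sorted(set(entry.files_touched) - set(step.files))
        if extra:
            deviations.append(f"{entry.step_id}: unplanned files {extra}")
    for step in plan:
        if step.step_id not in reported:
            deviations.append(f"planned step not reported: {step.step_id}")
    return deviations
```

With a plan of two steps and an artifact that touches an extra file and adds a step of its own, the function returns three short lines to review instead of the whole diff. That is the idea behind Phase 3, not a replacement for the human review in Phase 4.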

Where to start

If you do vibe coding: timebox your sessions. Vigilance starts to drop within the first half hour, and beyond 2 to 3 continuous hours there is little of it left. That is biological, not a discipline problem. Force breaks and review critical points (security, data) at the end of the session.

If you use an autonomous agent: do not review raw diffs. Require the agent to document its decisions as it implements. If your tool does not allow that, keep the agent limited to tasks small enough for the diff to remain readable (< 100 lines).
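One way to enforce the size limit is a small gate that counts changed lines in the agent's diff before anyone reviews it. The sketch below assumes the diff is available as standard unified diff text (for example the output of `git diff`). The function names and the default limit of 100 are illustrative choices, and combined merge diffs are not handled.

```python
import re

# Matches a unified diff hunk header such as "@@ -1,3 +1,4 @@".
HUNK_HEADER = re.compile(r"^@@ -\d+(?:,(\d+))? \+\d+(?:,(\d+))? @@")


def count_changed_lines(unified_diff: str) -> int:
    """Count added and removed lines inside the hunks of a unified diff."""
    changed = 0
    old_left = new_left = 0  # lines still expected in the current hunk
    for line in unified_diff.splitlines():
        if old_left <= 0 and new_left <= 0:
            # Outside a hunk: skip file headers until the next hunk header.
            match = HUNK_HEADER.match(line)
            if match:
                old_left = int(match.group(1) or 1)
                new_left = int(match.group(2) or 1)
            continue
        if line.startswith("+"):
            changed += 1
            new_left -= 1
        elif line.startswith("-"):
            changed += 1
            old_left -= 1
        elif line.startswith("\\"):
            continue  # "\ No newline at end of file" marker
        else:
            # Context line: present in both old and new versions.
            old_left -= 1
            new_left -= 1
    return changed


def is_reviewable(unified_diff: str, max_changed_lines: int = 100) -> bool:
    """True when the diff stays under the readable-size limit."""
    return count_changed_lines(unified_diff) < max_changed_lines
```

Tracking the line counts announced in each hunk header avoids mistaking `---` and `+++` file headers for changes. A task whose diff fails the check is a candidate for splitting before it reaches a human.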

If you want to test enhanced plan mode: start by explicitly separating the planning phase from the implementation phase. The most impactful change is the first one: stop letting the agent decide and execute at the same time.

If you manage a team that codes with AI: stop measuring only velocity. “AI goes faster” means nothing if your developers spend eight hours a day reviewing generated code without ever building anything themselves. You will get rising productivity metrics and a team in silent burnout, followed by turnover you did not see coming. Measure review load too, the ratio of code written versus code reviewed, and regularly ask your developers whether they still feel like they are building something.
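As a rough illustration of the review-load measure, the sketch below computes, per developer, the share of handled lines that were reviewed rather than written. The `WeeklyActivity` structure, the idea of counting lines, and the 0.8 alert threshold are all assumptions made for the example, not values drawn from the studies cited here. Line counts are a crude proxy and would need to come from your own tooling.

```python
from dataclasses import dataclass


@dataclass
class WeeklyActivity:
    """Per-developer counts for one week (hypothetical data shape)."""
    developer: str
    lines_written: int   # lines the developer authored
    lines_reviewed: int  # generated lines the developer reviewed


def review_share(activity: WeeklyActivity) -> float:
    """Fraction of handled lines that were reviewed rather than written."""
    total = activity.lines_written + activity.lines_reviewed
    if total == 0:
        return 0.0  # no activity recorded, nothing to flag
    return activity.lines_reviewed / total


def overloaded_reviewers(team: list[WeeklyActivity], threshold: float = 0.8) -> list[str]:
    """Names of developers whose week was mostly review (threshold is illustrative)."""
    return [a.developer for a in team if review_share(a) >= threshold]
```

A number like this does not replace the conversation. It only tells you which developers to ask first whether they still feel like they are building something.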

In every case: the question is not which tool you use. It is when you place your attention, and whether that moment is the right one.

AI does not replace developer judgment. It changes the moment when that judgment is requested, and each mode requests it differently. Fatigue and rubber-stamping are not individual weaknesses. They are the result of biological constraints. A workflow that integrates them works with the brain rather than against it.

Resources

Scientific research

- When Help Hurts: Verification Load and Fatigue with AI Coding Assistants (CHI 2026). N=60 developer study measuring verification load with AI assistants.

- The Sustained Attention Paradox (Sharpe & Tyndall, Cognitive Science 2025). Why perfect vigilance is biologically impossible.

- CognitIDE: Capturing Developers’ Cognitive Load (Stolp et al., 2025). Physiological measurement of cognitive load during coding, debugging, and review.

Field reports and industry data

- Why Developers Using AI Are Working Longer Hours (Scientific American, March 2026). AI removes the natural speed limits of development.

- Agentic Fatigue Meets Vibe Coding (explainx.ai, April 2026). The productivity/fatigue paradox, with Ramp data.

- AI Writes Better Code. We’re Getting Worse at Reviewing It. (Atomic Robot, February 2026). The aviation analogy applied to AI code review.

- Harness State of DevOps 2026 (700-engineer survey). AI accelerates code, while burnout increases.

Theoretical foundations

- Sweller, J. (1988) Cognitive Load Theory: intrinsic / extraneous / germane load.

- Skinner, B.F. (1953) Variable ratio reinforcement schedules.

- Baumeister, R.F. (2008) Decision fatigue.

AiBrain
