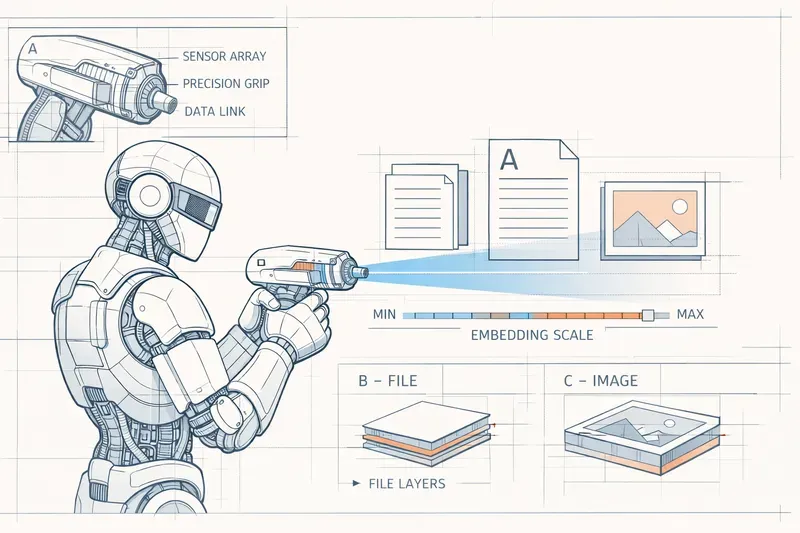

Embeddings are not just for retrieving the right chunk in a RAG pipeline. They are also a way to give your data a geography.

Executive Summary

Embeddings are usually reduced to a single use case: semantic search in a RAG pipeline. Useful, yes. Complete, no.

Their real value is elsewhere: they turn text into positions in a space of meaning. From there, you can measure proximity, detect groups, track movement, and reveal structures that a simple keyword system cannot see.

This article walks through five use cases that are easy to prototype:

- detecting topical drift in generated content

- mapping skills beyond exact keywords

- surfacing the real themes in customer support

- finding semantic duplicates in a knowledge base

- grouping error logs into incident families automatically

The central idea is simple: an embedding is not just a retrieval key. It is a usable representation for analyzing the latent structure of a corpus.

When people talk about embeddings, the conversation almost always follows the same pattern: encode documents, search by similarity, inject the retrieved passages into the prompt. Fine. That has become the default grammar of RAG.

But reducing embeddings to that role misses their real power. An embedding does not only say “this text looks like that text.” It places a piece of content in a space where distance, density, clusters, and trajectories become exploitable signals.

Put differently: embeddings are not just for search. They are for mapping.

Here are five practical uses, all realistic to prototype in an afternoon, that make this idea concrete.

1. Detecting Topical Drift in Generated Content

The Problem

You have an agent writing an article, a report, or a long-form answer. Across iterations, the text stays fluent, well written, sometimes even more elegant than the initial draft, yet it gradually drifts away from the requested topic.

This kind of drift is hard to catch with simple rules. The text can stay lexically correct while still losing the intent of the original brief.

The Idea

Embed the initial brief, then embed each generated version. Measure cosine similarity between the brief and every output.

If the score drops below a defined threshold, you treat the content as out of scope.
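
For reference, cosine similarity compares the direction of two embedding vectors $\mathbf{a}$ and $\mathbf{b}$ of dimension $d$ and ignores their length. It ranges from -1 to 1, and higher values mean the vectors point in more similar directions:

```latex
\cos(\mathbf{a}, \mathbf{b})
  = \frac{\mathbf{a} \cdot \mathbf{b}}{\lVert \mathbf{a} \rVert \, \lVert \mathbf{b} \rVert}
  = \frac{\sum_{i=1}^{d} a_i b_i}{\sqrt{\sum_{i=1}^{d} a_i^2}\,\sqrt{\sum_{i=1}^{d} b_i^2}}
```

The drift rule then amounts to flagging a version $v$ whenever $\cos(\mathbf{e}_{\text{brief}}, \mathbf{e}_v) < \tau$. The symbols $\mathbf{e}_{\text{brief}}$, $\mathbf{e}_v$ (the two embeddings) and $\tau$ (a threshold you pick and calibrate for your own embedding model) are notation introduced here for illustration.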

```
brief <- "Technical article on PostgreSQL query optimization"

version1 <- "B-tree indexes can accelerate SELECT queries..."
version2 <- "PostgreSQL is an open-source DBMS created at Berkeley..."
version3 <- "NoSQL databases like MongoDB offer an alternative..."

emb_brief <- embed(brief)

for each version in [version1, version2, version3]:
    score <- cosine_similarity(emb_brief, embed(version))
    if score < threshold:
        flag version as out of scope

# Example result
# version1 -> 0.87 : still on topic
# version2 -> 0.72 : mild drift
# version3 -> 0.54 : clear drift
```

Why It Helps

This gives you a semantic guardrail inside a generation loop. It is not a quality score. It is an alignment score.

In practice, this is useful for:

- monitoring a writing agent

- checking whether a summary is drifting toward background context instead of the core point

- detecting when a multi-step chain is losing the original instruction
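
A minimal runnable sketch of the drift check, using only the Python standard library. It assumes the embeddings were already produced by some model: the three-dimensional vectors below are invented placeholders, not real model output, and the 0.7 threshold is an arbitrary illustrative value you would calibrate on your own data. `check_drift` is a hypothetical helper name.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Dot product of a and b divided by the product of their norms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def check_drift(brief_vec, version_vecs, threshold=0.7):
    """Compare each version to the brief; return (version_number, score, on_topic)."""
    report = []
    for number, vec in enumerate(version_vecs, start=1):
        score = cosine_similarity(brief_vec, vec)
        report.append((number, score, score >= threshold))
    return report


# Placeholder vectors: a real pipeline would use embed(brief) and embed(version)
# from an embedding model. These 3-d values are invented for illustration.
brief = [1.0, 0.9, 0.1]
versions = [
    [0.9, 1.0, 0.2],   # stays close to the brief
    [0.5, 0.2, 0.8],   # partly about something else
    [0.1, 0.2, 1.0],   # mostly about something else
]

for number, score, on_topic in check_drift(brief, versions):
    status = "on topic" if on_topic else "drift"
    print(f"version{number}: {score:.2f} ({status})")
```

In a generation loop, the same check would run after each iteration, so a drop below the threshold can trigger a retry or a return to the original brief.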

2. Mapping Skills Without Getting Trapped by Keywords

The Problem

CV-to-job matching based on keywords breaks down as soon as the wording changes. A candidate can have the right capabilities without using the exact expected terms.

The Idea

Embed skill blocks, project descriptions, or experience summaries, then project the vectors into 2D with UMAP or t-SNE.

The resulting proximities and clusters reveal families of profiles even when the vocabulary differs.

```
for each cv in candidates:
    cv_embeddings[] <- embed(cv.skills)

job_embedding <- embed(job_description)

all_vectors <- cv_embeddings + [job_embedding]
projection_2D <- UMAP(all_vectors, dimensions=2)

display scatter plot:
    - blue dots : candidates
    - red star  : job description
```

What Changes

You move from word-to-word matching to profile proximity.

For example, a candidate who talks about “data pipelines,” “feature engineering,” and “model evaluation” may end up very close to a “machine learning” job even without using that exact label.

This is not an automated hiring decision. It is a way to inspect a talent pool more intelligently.
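
The UMAP step itself would typically rely on a library such as umap-learn (`umap.UMAP(n_components=2).fit_transform(...)`). Because a 2D projection cannot preserve every distance, it can help to cross-check the picture in the original embedding space. The sketch below does that with the standard library only, ranking candidates by cosine similarity to the job vector. The 4-d vectors and the candidate names are invented placeholders for `embed(cv.skills)` and `embed(job_description)`, and `rank_candidates` is a hypothetical helper.

```python
import math


def cosine_similarity(a, b):
    """Dot product of a and b divided by the product of their norms."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def rank_candidates(job_vec, candidate_vecs):
    """Return (name, similarity) pairs sorted from closest to farthest profile."""
    scored = [(name, cosine_similarity(job_vec, vec)) for name, vec in candidate_vecs.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Placeholder vectors standing in for embed(job_description) and embed(cv.skills).
job = [0.9, 0.8, 0.1, 0.0]
candidates = {
    "alice": [0.8, 0.9, 0.2, 0.1],
    "bob": [0.1, 0.2, 0.9, 0.8],
    "carol": [0.6, 0.3, 0.5, 0.2],
}

for name, score in rank_candidates(job, candidates):
    print(f"{name}: {score:.2f}")
```

With a real embedding model, this ranking is where wording differences stop mattering: two CVs describing the same skills in different terms should land at similar scores.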

3. Clustering Support Tickets Without a Predefined Taxonomy

The Problem

Manual categories such as “bug,” “question,” or “feature request” are too broad. They help with sorting, but they do not explain what is actually happening.

When 500 tickets arrive every week, what you want is not a vague label. You want recurring themes, product friction points, and weak signals.

The Idea

Embed the tickets, run an unsupervised clustering algorithm such as HDBSCAN, then ask an LLM to name each cluster based on a few representative examples.

```
for each ticket in support_tickets:
    embeddings[] <- embed(ticket.content)

clusters <- HDBSCAN(embeddings, min_cluster_size=10)

for each cluster (excluding noise points, which HDBSCAN labels -1):
    samples <- take_5_representative_tickets(cluster)
    theme <- LLM("Propose a short name for this group", samples)
```

Expected Result

Instead of three generic classes, you get operational themes such as:

- “mobile sync issues after update”

- “payment failure on Safari iOS”

- “UX confusion around the cancel button”

That difference matters because these themes are directly actionable for product and support teams.
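
HDBSCAN is available in the `hdbscan` package and, in recent versions, in scikit-learn as `sklearn.cluster.HDBSCAN`. To stay dependency-free, the sketch below swaps in a simpler technique: link every pair of tickets whose cosine similarity reaches a fixed cutoff and treat each connected component as a cluster (single-linkage at a fixed threshold). Like HDBSCAN, it labels points in groups smaller than `min_cluster_size` as `-1`, but it performs no density estimation. The ticket texts, vectors, and the `threshold_clusters` helper are hypothetical, and the LLM naming step is left out.

```python
import math
from itertools import combinations


def cosine_similarity(a, b):
    """Dot product of a and b divided by the product of their norms."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def threshold_clusters(vectors, sim_threshold=0.9, min_cluster_size=2):
    """Label each vector with a cluster id, or -1 for noise.

    Vectors are linked when their cosine similarity reaches sim_threshold;
    each connected component is a cluster. Components smaller than
    min_cluster_size are labeled -1. This is a stand-in, not HDBSCAN.
    """
    parent = list(range(len(vectors)))

    def find(i):
        # Follow parent links to the component root, halving the path as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i, j in combinations(range(len(vectors)), 2):
        if cosine_similarity(vectors[i], vectors[j]) >= sim_threshold:
            parent[find(i)] = find(j)

    roots = [find(i) for i in range(len(vectors))]
    sizes = {root: roots.count(root) for root in set(roots)}
    root_to_label = {}
    labels = []
    for root in roots:
        if sizes[root] < min_cluster_size:
            labels.append(-1)
            continue
        if root not in root_to_label:
            # Number clusters in order of first appearance.
            root_to_label[root] = len(root_to_label)
        labels.append(root_to_label[root])
    return labels


# Hypothetical tickets with invented 3-d vectors in place of embed(ticket.content).
tickets = {
    "Sync stopped after the last update": [0.9, 0.1, 0.0],
    "Phone app no longer syncs since updating": [0.85, 0.15, 0.05],
    "Card declined on Safari iPhone": [0.1, 0.9, 0.1],
    "Payment fails in iOS Safari checkout": [0.05, 0.95, 0.1],
    "Where is the dark mode setting?": [0.1, 0.1, 0.9],
}

texts = list(tickets)
labels = threshold_clusters(list(tickets.values()))
for label in sorted(set(labels) - {-1}):
    members = [text for text, lab in zip(texts, labels) if lab == label]
    # In the full pipeline, these members would be sent to an LLM for naming.
    print(label, members)
```

The fixed cutoff is the main weakness of this stand-in: it has to be tuned by hand, whereas HDBSCAN adapts to clusters of different densities.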

4. Spotting Semantic Duplicates in a Knowledge Base

The Problem

In a knowledge base that has grown for months or years, duplicates are almost never strictly identical. They often say the same thing with different wording, sometimes with contradictory details.

Full-text search catches the obvious duplicates. It often misses the ones that overlap heavily without sharing the same exact wording.

The Idea

Embed every article, then build a similarity matrix and extract all pairs above a threshold.

```
articles <- load_knowledge_base()

for each article in articles:
    embeddings[article.title] <- embed(article.content)

duplicates <- []

for each unique pair (article_A, article_B) in articles:   # no self-pairs, no (B, A) repeats
    sim <- cosine_similarity(embeddings[article_A.title], embeddings[article_B.title])
    if sim > 0.85:
        duplicates.add(article_A, article_B, sim)

sort duplicates by sim, descending
```

Why It Works

You get a prioritized list of content that should be merged or cleaned up:

- two guides explaining the same onboarding flow

- two VPN procedures written for different audiences

- two runbook entries describing the same incident under different titles

An LLM can help produce a merged version afterward, but the key step is identifying the overlap in the first place.
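
A runnable version of the pairwise scan, standard library only. `itertools.combinations` yields each unordered pair exactly once, which removes self-pairs and mirrored duplicates. The 0.85 threshold comes from the pseudocode; the right value depends on the embedding model. The titles, vectors, and `find_semantic_duplicates` helper are hypothetical placeholders.

```python
import math
from itertools import combinations


def cosine_similarity(a, b):
    """Dot product of a and b divided by the product of their norms."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def find_semantic_duplicates(embeddings, threshold=0.85):
    """Return (title_a, title_b, similarity) for each unique pair above threshold, most similar first."""
    pairs = []
    # combinations() yields every unordered pair once: no self-pairs, no (B, A) repeats.
    for (title_a, vec_a), (title_b, vec_b) in combinations(embeddings.items(), 2):
        sim = cosine_similarity(vec_a, vec_b)
        if sim > threshold:
            pairs.append((title_a, title_b, sim))
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)


# Hypothetical knowledge-base titles with invented vectors in place of embed(article.content).
kb_embeddings = {
    "Onboarding: first login": [0.9, 0.2, 0.1],
    "Getting started guide": [0.85, 0.3, 0.1],
    "VPN setup (engineering)": [0.1, 0.9, 0.3],
    "VPN access for sales": [0.2, 0.85, 0.35],
    "Expense policy": [0.1, 0.1, 0.95],
}

for title_a, title_b, sim in find_semantic_duplicates(kb_embeddings):
    print(f"{sim:.2f}  {title_a}  <->  {title_b}")
```

The number of comparisons grows as n(n-1)/2, which is fine for a few thousand articles but becomes expensive for very large knowledge bases.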

5. Surfacing Incident Families in Logs

The Problem

Traditional log tooling is good at finding what you already know: a regex, an error code, an expected pattern.

But new, rare, or diffuse incidents are easy to miss because they do not yet have a stable signature.

The Idea

Embed error messages, then cluster them to reveal semantically related groups even when the exact phrasing varies.

```
logs <- load_error_logs("app.log", level="ERROR", last_24h)

for each log in logs:
    embeddings[] <- embed(log.message)

clusters <- HDBSCAN(embeddings, min_cluster_size=5)

for each cluster (excluding noise points, which HDBSCAN labels -1):
    samples <- take_5_representative_logs(cluster)
    cause <- LLM("Summarize the probable root cause", samples)
```

What This Changes

You are no longer just counting known errors. You are surfacing families of incidents.

For example:

- “Redis pool saturation under load”

- “access to a null profile after account deletion”

- “intermittent timeouts toward the payment service”

The gain is twofold: you detect new issues faster, and you save time during incident qualification.
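
The pseudocode leaves `take_5_representative_logs` undefined. One common reading, assumed in this sketch, is "the members closest to the cluster centroid". The sketch picks those messages and assembles the prompt text; the LLM call itself is not shown. The log messages, 2-d vectors, and the helpers `take_representatives` and `build_root_cause_prompt` are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Dot product of a and b divided by the product of their norms."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def centroid(vectors):
    """Component-wise mean of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def take_representatives(messages, vectors, k=5):
    """Return up to k messages whose vectors are most similar to the cluster centroid."""
    center = centroid(vectors)
    ranked = sorted(
        zip(messages, vectors),
        key=lambda item: cosine_similarity(item[1], center),
        reverse=True,
    )
    return [message for message, _ in ranked[:k]]


def build_root_cause_prompt(samples):
    """Assemble the prompt text; sending it to an LLM is left out of this sketch."""
    bullet_list = "\n".join(f"- {sample}" for sample in samples)
    return f"Summarize the probable root cause of these errors:\n{bullet_list}"


# One hypothetical cluster of log messages with invented 2-d vectors.
cluster_messages = [
    "redis: connection pool exhausted (timeout 5000 ms)",
    "Could not get a resource from the pool",
    "redis pool exhausted while handling POST /checkout",
    "Timeout waiting for idle object",
]
cluster_vectors = [[0.9, 0.1], [0.8, 0.2], [0.95, 0.05], [0.4, 0.7]]

samples = take_representatives(cluster_messages, cluster_vectors, k=2)
print(build_root_cause_prompt(samples))
```

Choosing central members rather than random ones keeps atypical messages out of the prompt, so the LLM summarizes what the cluster has in common.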

The Common Thread: Embeddings Are a Geography

If you keep only one idea, make it this one: an embedding turns content into coordinates in a space of meaning.

From that point on, geometric operations become analytical operations:

- distance measures semantic proximity

- clustering reveals recurring themes

- projection exposes the hidden structure of a corpus

- trajectory shows drift or evolution

- dense neighborhoods point to families of similar cases

Semantic search is only one use case among many. An important one, but still only one.

The real mindset shift is to stop seeing embeddings as a retrieval API and start seeing them as an observation layer for your data.

Once you do that, you stop merely searching for the right text. You start understanding the shape of your corpus.

AiBrain
