Critical reading of the paper HyperAgents (Zhang et al., March 2026) arXiv: https://arxiv.org/pdf/2603.19461

Executive Summary

Observation: The HyperAgents paper can make it look like we finally have a general architecture for self-improving agents. In practice, that is not where its main value lies.

Core idea: HyperAgents should be read as an exploration machine. Its value is that it lets agent engineering mechanisms emerge through repeated trials. Those mechanisms can later be reimplemented in simpler, more stable, and more auditable systems.

Why this is not a production architecture: A continuous self-modification loop remains expensive, hard to audit, and difficult to justify in industrial settings where readability, robustness, and cost control matter most.

What the paper really contributes: It shows that reusable mechanisms can emerge without being explicitly designed in advance, including persistent memory, performance tracking, structured evaluation pipelines, and compute-aware strategies.

Practical reading: The right workflow is not “deploy HyperAgents as is,” but rather:

- explore inside a bounded setup,

- extract the stable patterns that consistently improve results,

- freeze those patterns into a classical production agent.

Glossary

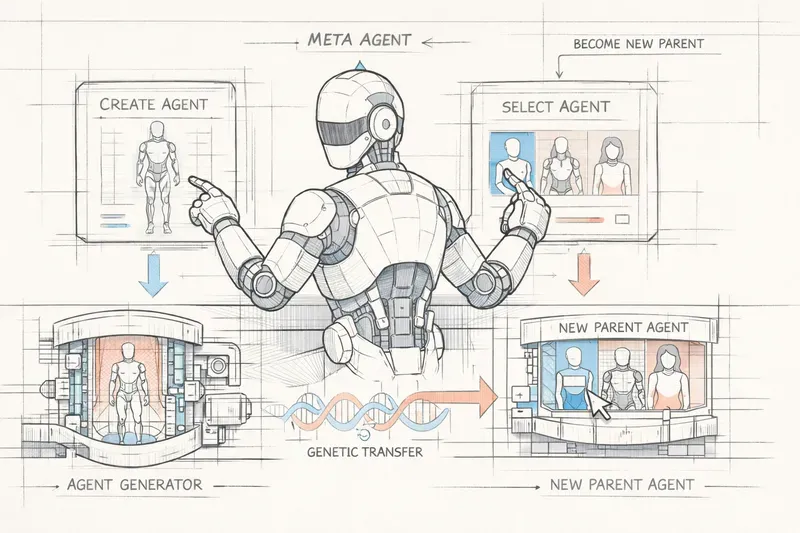

- Hyperagent: a program that contains both a task agent and a meta agent responsible for generating the next modifications.

- Task agent: the part of the system that directly performs the target task, such as solving a problem, grading a proof, or writing a review.

- Meta agent: the component that inspects the current system, proposes changes, and generates the next agent version.

- Self-modification: the ability of a system to modify its own program or execution strategy.

- Metacognitive self-modification: the ability of a system to improve not only how it solves a task, but also how it produces its own improvements.

- Archive: the collection of agent variants generated and preserved across iterations, used to maintain diversity and continuity in exploration.

- Open-ended exploration: an improvement process without a predefined target form, where the system freely explores variants that may lead to unexpected mechanisms.

- Cross-domain transfer: the ability to reuse mechanisms learned in one domain inside a different domain.

- Agent engineering pattern: a reusable mechanism in agent design, such as persistent memory, a performance tracker, or a multi-stage evaluation pipeline.

- Frozen artifact: a stabilized and reimplemented version of a mechanism discovered during exploration, intended for production use.

A very promising paper has just been released: HyperAgents.

At first glance, you might think: this is it, self-improving agents are here. Or, on the contrary, you might dismiss it immediately: yet another lab demo with little practical value.

My reading is different.

I do not think this paper is most interesting as a target production architecture. I find it much more interesting as an exploration machine: a way to make agent engineering patterns emerge, so they can later be frozen into more robust systems.

That is the distinction I want to develop here, from the perspective of someone who spends as much time running agents in production as reading papers about them.

What the paper proposes

The starting problem

The Darwin Gödel Machine (DGM), the direct predecessor, already showed that a coding agent could improve itself in an open-ended way. It generates variants of itself, evaluates them on a benchmark, adds the valid ones to an archive, and uses archived agents as starting points for the next generation. This works in coding because the task (writing code) and the self-modification capability (modifying code) are aligned: better coding also means better self-modification.

Outside coding, that alignment disappears. An agent that gets better at grading mathematical proofs does not automatically become better at modifying its own source code. The instruction-generation mechanism in DGM, the procedure that decides what to improve, remains fixed and handcrafted. That is the bottleneck.

The proposed solution: make the meta agent editable too

The authors introduce the concept of a hyperagent: a single program containing both a task agent and a meta agent. The key point is that the meta agent is itself inside the editable program. It can therefore rewrite itself.

What this changes concretely is that the system can improve not only how it solves a task, but also how it generates its own improvements. The authors call this metacognitive self-modification.

The loop in practice

The system starts from a deliberately minimal hyperagent: the task agent makes a single LLM call, while the meta agent receives a simple instruction telling it to modify whatever it wants in the repository.
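The following is a minimal sketch of what "one editable program containing both agents" means, representing a hyperagent as plain data. Every detail is an illustrative assumption, not the paper's code. This includes the names `Hyperagent`, `task_prompt`, `meta_instruction`, and `propose_next`, the `|||` response format, and the `call_llm` callable, which stands in for a real model client. The real system edits a whole repository of source files, not two strings. The point is structural: the meta agent's instruction lives in the same editable object as the task agent, so a single modification can rewrite both.

```python
from dataclasses import dataclass, replace
from typing import Callable

# Hypothetical stand-in for a model call; the real system queries an LLM.
LLM = Callable[[str], str]


@dataclass(frozen=True)
class Hyperagent:
    # Editable "source" of the task agent: the prompt template
    # used for its single LLM call.
    task_prompt: str
    # Editable "source" of the meta agent: the instruction it follows when
    # producing the next version. Because it lives in the same program,
    # a modification can rewrite it too (metacognitive self-modification).
    meta_instruction: str

    def solve(self, task: str, call_llm: LLM) -> str:
        # Task agent: a single LLM call, as in the minimal starting point.
        return call_llm(self.task_prompt.format(task=task))

    def propose_next(self, call_llm: LLM) -> "Hyperagent":
        # Meta agent: asks the model for new versions of BOTH fields.
        # The "new_task|||new_meta" response format is an assumption of this sketch.
        response = call_llm(
            f"{self.meta_instruction}\n"
            f"TASK PROMPT:\n{self.task_prompt}\n"
            f"META INSTRUCTION:\n{self.meta_instruction}"
        )
        new_task, sep, new_meta = response.partition("|||")
        if not sep:
            return self  # unparseable proposal: keep the current version
        return replace(self, task_prompt=new_task, meta_instruction=new_meta)


# Minimal initial hyperagent: one LLM call, one open-ended meta instruction.
initial = Hyperagent(
    task_prompt="Solve the following task:\n{task}",
    meta_instruction="Modify any part of this program to improve it.",
)
```

In DGM, by contrast, the equivalent of `meta_instruction` would sit outside the editable object and stay fixed.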

At each iteration:

- a parent hyperagent is selected from the archive in proportion to its performance,

- its meta agent runs, inspects the repository and past results, then generates a modified version,

- the new hyperagent is empirically evaluated on domain tasks,

- if it compiles and produces a valid result, it is added to the archive.

The archive grows while preserving a diversity of variants, some higher performing, others useful as intermediate stepping stones. Selection is biased toward strong agents with few children in order to preserve exploration.
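The loop and its selection rule can be sketched as a toy simulation. This rests on stated assumptions and is not the authors' implementation. The weighting `score / (1 + children)` is one simple way, assumed here, to combine "proportional to performance" with "biased toward agents with few children". `toy_propose_and_evaluate` is hypothetical: it replaces the real meta-agent run and domain evaluation with random noise. Returning `None` models a child that fails to compile or to produce a valid result, so it is not archived.

```python
import random
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ArchiveEntry:
    agent_id: int
    score: float       # empirical score on the domain's evaluation tasks
    children: int = 0  # number of times this entry was picked as a parent


def selection_weight(entry: ArchiveEntry) -> float:
    # Assumed rule: proportional to performance, discounted by the number
    # of children so that strong but rarely expanded agents are favored.
    return max(entry.score, 1e-6) / (1 + entry.children)


def select_parent(archive: list[ArchiveEntry], rng: random.Random) -> ArchiveEntry:
    weights = [selection_weight(e) for e in archive]
    return rng.choices(archive, weights=weights, k=1)[0]


def run_loop(
    iterations: int,
    propose_and_evaluate: Callable[[ArchiveEntry, random.Random], Optional[float]],
    initial_score: float,
    seed: int = 0,
) -> list[ArchiveEntry]:
    rng = random.Random(seed)
    archive = [ArchiveEntry(agent_id=0, score=initial_score)]
    for _ in range(iterations):
        parent = select_parent(archive, rng)
        parent.children += 1  # counts attempts, valid or not
        child_score = propose_and_evaluate(parent, rng)
        if child_score is None:
            continue  # invalid child: discarded, archive unchanged
        # Valid children are kept even if weaker: they may be stepping stones.
        archive.append(ArchiveEntry(agent_id=len(archive), score=child_score))
    return archive


def toy_propose_and_evaluate(parent: ArchiveEntry, rng: random.Random) -> Optional[float]:
    # Hypothetical stand-in: 20% of children are invalid; valid ones land
    # near their parent's score, clipped to [0, 1].
    if rng.random() < 0.2:
        return None
    return min(1.0, max(0.0, parent.score + rng.gauss(0.0, 0.05)))


archive = run_loop(50, toy_propose_and_evaluate, initial_score=0.1)
```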

The results

The experiments span four domains: coding (Polyglot), ML paper peer review, reward design in robotics (Genesis simulator), and IMO proof grading.

In every domain, DGM-H (the paper's full system: DGM extended with hyperagents) significantly improves performance relative to the initial agent and to baselines without self-improvement or without open-ended exploration.

The most striking result is cross-domain transfer: a hyperagent trained on paper review and robotics is transferred without modification to IMO grading, a very different domain. After 50 additional iterations starting from that transferred agent, the system reaches a gain of 0.630, whereas the initial agent and transferred DGM-custom agents remain at 0.0.

Why I do not read it as a production architecture

In real-world systems, reliability comes from frozen setups: readable, predictable, auditable systems. A continuous self-modification loop in production is hard to defend as a target architecture.

There is also a direct economic argument. In Appendix D.2, the authors report token consumption in the tens of millions for a full experimental program: self-modification plus evaluation, over 100 iterations, with 5 repeated runs per condition. That cost profile is not viable as a continuous loop for most industrial use cases.
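Converting the total into a per-iteration figure shows why. The paper gives an order of magnitude, not the exact breakdown used below. For illustration only, assume a total of 10 million tokens spread evenly over one condition's 100 iterations × 5 runs. Each iteration would then cost about 20,000 tokens. The helper names and the 24-iterations-per-day schedule are hypothetical.

```python
def implied_tokens_per_iteration(total_tokens: int, iterations: int = 100, runs: int = 5) -> float:
    """Average cost of one iteration (self-modification plus evaluation)."""
    return total_tokens / (iterations * runs)


def continuous_loop_tokens(tokens_per_iteration: float, iterations_per_day: int, days: int) -> float:
    """Projected consumption if the same loop kept running in production."""
    return tokens_per_iteration * iterations_per_day * days


# Assumed total of 10M tokens for one condition (illustrative, not from the paper).
per_iteration = implied_tokens_per_iteration(10_000_000)
# Assumed hourly loop for 30 days.
monthly = continuous_loop_tokens(per_iteration, iterations_per_day=24, days=30)
```

Under these assumptions, an hourly loop would consume roughly 14.4 million tokens a month for a single domain, before any production traffic.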

But stopping there would be a mistake.

What DGM-H actually makes emerge

To me, the most valuable part of the paper is what the system produces over generations.

The paper shows structure increasing progressively over generations, from superficial prompt tweaks to genuinely reusable mechanisms (Appendix E.3):

- PerformanceTracker: moving average, trend detection, cross-generation comparison

- Persistent memory: synthesized insights, causal hypotheses, cross-iteration forward plans

- Automatic bias detection: identifying classification collapse and correcting it through thresholds

- Structured evaluation pipelines: explicit two-stage evaluation, checklists, decision trees

- Compute-aware planning: adapting strategy to the remaining compute budget
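The code below is not the agent-generated PerformanceTracker from Appendix E.3. It is a minimal reimplementation of the three functions listed for the first item, assuming one score per evaluated agent, tagged with its generation. The window size, the tolerance, and the use of two consecutive windows as a trend test are choices made for this sketch, not details from the paper.

```python
from statistics import mean


class PerformanceTracker:
    """Sketch: moving average, trend detection, cross-generation comparison."""

    def __init__(self, window: int = 5, trend_tolerance: float = 0.01) -> None:
        self.window = window
        self.trend_tolerance = trend_tolerance
        self.history: list[tuple[int, float]] = []  # (generation, score)

    def record(self, generation: int, score: float) -> None:
        self.history.append((generation, score))

    def moving_average(self) -> float:
        # Mean of the most recent `window` scores (0.0 if nothing recorded).
        recent = [s for _, s in self.history[-self.window:]]
        return mean(recent) if recent else 0.0

    def trend(self) -> str:
        # Compare the latest window with the window just before it.
        scores = [s for _, s in self.history]
        if len(scores) < 2 * self.window:
            return "insufficient data"
        delta = mean(scores[-self.window:]) - mean(scores[-2 * self.window:-self.window])
        if delta > self.trend_tolerance:
            return "improving"
        if delta < -self.trend_tolerance:
            return "declining"
        return "flat"

    def compare_generations(self, gen_a: int, gen_b: int) -> float:
        # Mean score of generation b minus mean score of generation a.
        a = [s for g, s in self.history if g == gen_a]
        b = [s for g, s in self.history if g == gen_b]
        if not a or not b:
            raise ValueError("both generations need at least one recorded score")
        return mean(b) - mean(a)
```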

None of these mechanisms were explicitly requested. The system discovered them while searching for better performance. That is precisely why they matter: they are the product of expensive exploration, but they can then be frozen at near-zero cost inside a classical system.
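Freezing a mechanism usually means reducing it to a few deterministic lines. Take the bias-detection item as an example, and assume a grader that outputs one score per item and turns it into accept/reject labels. The sketch flags "collapse" when one label exceeds a share of the predictions (90% here, an arbitrary choice). It then corrects the collapse by moving the threshold to match a target positive rate. The function names, labels, and target rate are hypothetical, and the paper's agents may detect and correct collapse differently.

```python
from collections import Counter
from typing import Optional


def detect_collapse(labels: list[str], max_share: float = 0.9) -> Optional[str]:
    """Return the dominant label if it exceeds max_share of predictions, else None."""
    if not labels:
        return None
    label, count = Counter(labels).most_common(1)[0]
    return label if count / len(labels) > max_share else None


def recalibrate_threshold(scores: list[float], target_positive_rate: float) -> float:
    """Pick a threshold so that roughly target_positive_rate of scores are >= it."""
    if not scores:
        raise ValueError("need at least one score")
    ranked = sorted(scores, reverse=True)
    k = max(1, round(target_positive_rate * len(ranked)))
    return ranked[k - 1]  # ties may push the accept count slightly above k


def classify(scores: list[float], threshold: float) -> list[str]:
    return ["accept" if s >= threshold else "reject" for s in scores]


scores = [0.62, 0.71, 0.66, 0.80, 0.58, 0.69, 0.75, 0.64, 0.73, 0.67]
```

With a default threshold of 0.5, every item in `scores` is accepted. Recalibrating to a 30% target accepts only the top three scores, and the collapse flag clears.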

Dynamic systems are for exploration. Frozen systems are for reliability.

What the transfer results confirm

The transfer experiments point in exactly the same direction.

What survives from one domain to another is not domain knowledge. It is meta-level machinery: memory, tracking, and more structured self-modification procedures.

BetterGrader does not discover “rubrics are useful” in some abstract general sense. It discovers a calibration better suited to the IMO domain, with a score distribution that matches human annotations better than ProofAutoGrader. That is emergent specialization, and it can later be crystallized.

DGM-custom, the version with hand-engineered instruction generation for each domain, remains competitive on raw results. But it requires human engineering for every new domain. DGM-H produces specialization without that intervention. The difference is therefore not only about performance, but also about engineering cost.

What I would do with it in practice

Read as an exploration machine, DGM-H suggests a three-step workflow:

- Explore: run a bounded DGM-H campaign with a defined token budget, a precise domain, and a strict sandbox, in order to make agent engineering patterns emerge.

- Extract: identify the mechanisms that survived across multiple generations and improved results in a stable way.

- Freeze: reimplement those mechanisms inside a classical, static, auditable agent.
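The Extract step is where judgment enters. One simple heuristic, assumed here rather than taken from the paper, keeps a mechanism only under two conditions. It must appear in several distinct generations, and variants carrying it must score higher on average than variants without it. The `Variant` record, the mechanism labels, and both thresholds are hypothetical. The comparison is also correlational: a mechanism can ride along with a better one, so kept candidates still deserve an ablation before being frozen.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Variant:
    generation: int
    mechanisms: frozenset[str]  # hypothetical labels, e.g. "memory", "tracker"
    score: float


def stable_mechanisms(
    variants: list[Variant], min_generations: int = 3, min_gain: float = 0.02
) -> dict[str, float]:
    """Return {mechanism: mean score gain} for mechanisms passing both filters."""
    all_mechs = set().union(*(v.mechanisms for v in variants))
    kept: dict[str, float] = {}
    for mech in sorted(all_mechs):
        with_m = [v for v in variants if mech in v.mechanisms]
        without_m = [v for v in variants if mech not in v.mechanisms]
        # Survival filter: present in enough distinct generations.
        if len({v.generation for v in with_m}) < min_generations or not without_m:
            continue
        # Improvement filter: correlational gain, not a causal estimate.
        gain = mean(v.score for v in with_m) - mean(v.score for v in without_m)
        if gain >= min_gain:
            kept[mech] = gain
    return kept


variants = [
    Variant(0, frozenset(), 0.10),
    Variant(1, frozenset({"memory"}), 0.20),
    Variant(2, frozenset({"memory", "tracker"}), 0.30),
    Variant(3, frozenset({"memory", "tracker"}), 0.35),
    Variant(4, frozenset({"memory", "tracker", "quirk"}), 0.25),
    Variant(4, frozenset({"memory"}), 0.22),
]
```

In this toy data, "quirk" appears in only one generation and is filtered out, while "memory" and "tracker" pass both filters.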

This workflow has an exploration cost that can be justified as an R&D investment, while producing a production artifact that remains maintainable.

The practical question the paper leaves open, and the one that interests me most, is monitoring: how do you detect that a frozen artifact is drifting over time, and when do you relaunch a new exploration campaign?
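The paper does not answer this question. One possible starting point works as follows. Re-run the frozen artifact periodically on a fixed evaluation set and keep a rolling mean of its scores. Then flag a new exploration campaign when that mean stays below the score measured at freeze time, by more than a tolerance, for several consecutive checks. `DriftMonitor` and its parameters are hypothetical, and the window, tolerance, and patience values would need tuning per domain.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags a frozen artifact for a new exploration campaign when its rolling
    score on a fixed evaluation set stays below the freeze-time baseline."""

    def __init__(self, baseline: float, window: int = 20, tolerance: float = 0.05, patience: int = 3) -> None:
        self.baseline = baseline    # score measured when the artifact was frozen
        self.tolerance = tolerance  # acceptable drop before counting a breach
        self.patience = patience    # consecutive breaches required to trigger
        self.recent: deque[float] = deque(maxlen=window)
        self.breaches = 0

    def observe(self, score: float) -> bool:
        """Record one evaluation score; return True when a relaunch is warranted."""
        self.recent.append(score)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough data for a stable rolling mean yet
        if mean(self.recent) < self.baseline - self.tolerance:
            self.breaches += 1
        else:
            self.breaches = 0  # recovery resets the count
        return self.breaches >= self.patience
```

The `patience` parameter prevents a single noisy evaluation from launching an expensive campaign.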

The professional shift

This may be the most important conclusion.

If this kind of approach becomes widespread, the work of an agent engineer will look less like “writing behaviors” and more like:

- defining the exploration frame and its constraints,

- designing robust evaluation criteria,

- deciding when a result deserves to be crystallized,

- monitoring the frozen artifact over time.

The paper does not draw that conclusion explicitly. And yet it seems to me like the most actionable one for anyone building agentic systems today.

We explore to learn, we freeze to deploy.

AiBrain
