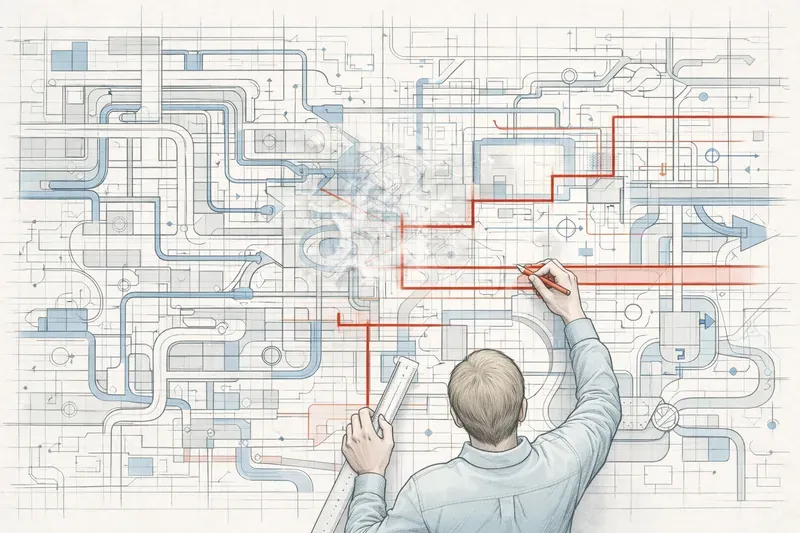

A field report on how I am now untangling a multi-agent framework launched too quickly in the euphoria of vibe coding 2025, and why I think the right question is no longer “does it work?”

The moment I realized I was no longer holding the wheel

A few months ago, I launched a multi-agent, multi-client framework. Frontend, API, core, orchestrator, agents, registry, everything was moving at a speed I had never seen before in twenty-five years of software work. Agents were generating routes, rewriting contracts, and proposing abstractions. CI stayed green. Demos worked. Like many people in 2025, I was riding the euphoria of vibe coding.

Then one day, I wanted to add one more parameter to the start of a run. A trivial change. And I hesitated.

Not because the code was messy. It was clean. Not because the tests were failing. They were passing. I hesitated because I was no longer sure where that parameter belonged. In the agent schema? In the API contract? In the registry? In a repurposed HIL (human-in-the-loop, the moments when the system pauses to ask something from the user)? Every option looked defensible. None felt obviously right. I realized I no longer really knew which boundaries my system was supposed to preserve.

It was not a bug. It was a different kind of debt.

Comprehension debt: the other debt, the one that does not signal itself

Addy Osmani recently gave it a name in his article “Comprehension Debt - the hidden cost of AI generated code”: comprehension debt. It is the gap between the amount of code your system contains and the amount any human being actually understands about what is going on inside it.

Why it is more dangerous than technical debt

It does not look like technical debt. You can feel technical debt: slow builds, tangled dependencies, that low-level fear every time you touch that module. You usually know roughly where it lives, and you can plan to pay it back.

Comprehension debt gives no warning. The code stays readable, the tests stay green, and reviews keep moving. But nobody really knows anymore why a given decision was made, or what will happen when it changes. The system’s theory evaporates silently while its weight keeps increasing.

The speed asymmetry as a new terrain

The asymmetry is fundamental: AI produces code faster than I can audit it. Historically, human review was a bottleneck, but a productive bottleneck. Reading a pull request forced understanding. That bottleneck is now gone, and tests do not compensate for it. They only validate what we were explicit enough to specify.

People talk a lot about how quickly AI lets us produce code. Not enough about how quickly it can produce architectural drift.

Where the boundaries became blurry

When I went back to that framework, I did not see a system that had “gone wrong.” I saw a system whose boundaries had become less sharp.

This was not negligence. It was design ambiguity: multiple locally reasonable choices that were making the exact role of each layer less and less obvious.

1. The agent/orchestrator boundary had become less clear

At the beginning I had two primitives in mind: an agent that knows how to do one precise thing well (a structured LLM call with its tools and prompt), and an orchestrator that describes a workflow (sequences of steps, branching, retries, and parallel execution). Over successive assisted generations, some agents started reading the orchestrator context directly. Others started making routing decisions. The atomic execution unit and the workflow had started to overlap. Every overlap saved an interface. Every overlap made the system less testable and less composable.

2. Launch parameters no longer had an obvious entry point

Each orchestrator expected its inputs through local convention: one field here, a string there, an object elsewhere. The registry no longer knew what it was supposed to expose. The frontend was rendering hand-made forms. The API was validating weakly. Nothing was technically wrong. Nothing was structurally solid either.

3. HIL had grown into an ambiguous surface

HIL was supposed to serve human interventions during execution, but it had started being used to capture startup inputs as well. This is exactly the kind of “practical” reuse AI happily suggests: instead of introducing a new mechanism, stretch an existing one. But HIL was designed for a specific use case, and covering every input diluted its semantics.

Each of these points had been produced cleanly, with tests, and without debate in review. None of them was an obvious mistake. But taken together, they matched exactly what Osmani describes: a system whose trajectory becomes less controlled while CI remains green.

ADRs as a tool of technical sovereignty

That is when I started taking ADRs (Architecture Decision Records) seriously. Not as a documentation exercise. As a tool of technical sovereignty.

Because ultimately, the right question is not: “does the code work?” The right question becomes:

“Does this change modify a structuring decision?”

And just asking that question is already valuable.

The five criteria that should trigger an ADR

A change probably deserves an ADR if it:

- touches a system boundary,

- changes an invariant,

- introduces a durable dependency,

- changes an interface contract,

- or moves a central piece of logic.
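As a rough sketch, the five criteria can be written down as an explicit checklist that a developer fills in before merging. Every name here (`ChangeAssessment`, `adrReasons`) is hypothetical and only illustrates the idea; nothing in it is taken from the project itself.

```typescript
// Hypothetical names throughout: an illustration, not the project's tooling.

// One flag per criterion from the list above.
interface ChangeAssessment {
  touchesSystemBoundary: boolean;
  changesInvariant: boolean;
  introducesDurableDependency: boolean;
  changesInterfaceContract: boolean;
  movesCentralLogic: boolean;
}

const CRITERIA_LABELS: Record<keyof ChangeAssessment, string> = {
  touchesSystemBoundary: "touches a system boundary",
  changesInvariant: "changes an invariant",
  introducesDurableDependency: "introduces a durable dependency",
  changesInterfaceContract: "changes an interface contract",
  movesCentralLogic: "moves a central piece of logic",
};

// Returns the reasons why a change probably deserves an ADR.
// An empty array means none of the five criteria was ticked.
function adrReasons(change: ChangeAssessment): string[] {
  return (Object.keys(CRITERIA_LABELS) as (keyof ChangeAssessment)[])
    .filter((key) => change[key])
    .map((key) => CRITERIA_LABELS[key]);
}

// Example: a new launch parameter that alters the API contract.
const reasons = adrReasons({
  touchesSystemBoundary: true,
  changesInvariant: false,
  introducesDurableDependency: false,
  changesInterfaceContract: true,
  movesCentralLogic: false,
});

console.log(
  reasons.length > 0
    ? `Probably deserves an ADR: ${reasons.join("; ")}`
    : "None of the five criteria applies",
);
```

The value is less in the function than in having to answer five yes/no questions explicitly.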

What an ADR forces you to make explicit

An ADR forces you to state five things we rarely spell out to ourselves while coding: the context in which the decision exists, the decision itself, the rejected alternatives, the accepted consequences, and above all the invariants we want to protect.

Code says what was done. Tests say what we verify. An ADR says why this direction was chosen instead of another one, and what we no longer allow ourselves to break.

In a world where AI generates quickly, abundantly, and sometimes too easily, that layer becomes essential. Not because documentation suddenly matters more than before. Because the pace of production has made it impossible to control trajectory through line-by-line review alone. We do not keep control of a system by rereading every line. We keep control by maintaining a shared understanding of the decisions shaping its trajectory.

The hesitation test

One thing I discovered after adopting this practice was counterintuitive:

Simply asking “does this change deserve an ADR?” is already a powerful signal, regardless of the answer.

That question deliberately introduces friction. It slows the gesture down. When I ask it and I am not immediately sure, the hesitation itself is a sign that something structurally important may be moving.

Three forms of hesitation I have learned not to ignore:

The minimization. When I tell myself “this is just a small adjustment” on a component that is actually central. The change may be trivial in lines of code, but not in consequences.

The need for social validation. When I feel that I would want to discuss this with my team before merging, even vaguely. That instinct is often the instinct that an invariant is moving.

The unnamed alternative. When I am not sure I can explain in two sentences why I am making this change instead of a credible alternative. If the alternative deserves to be named, the choice deserves to be recorded.

Friction as a resource

In an environment where AI makes everything too fluid, friction is a resource. This runs against the spirit of the times. For the past ten years, the industry has elevated zero friction into a cardinal virtue: continuous deployment, one-click onboarding, IDEs that autocomplete before we have even fully formed our intent. We have confused useless friction, such as endless forms and arbitrary approvals, with productive friction: the few seconds in which we ask whether we are making the right decision. By removing both at once, AI has made visible what the second one was giving us.

If you have no friction, you are not designing. You are submitting to generation.

Friction is not a bug in the process. It is the sign that a decision is being made. Removing it entirely means delegating design without even realizing that you have delegated it.

Asking the question is already a way of taking back control over the rhythm imposed by the tool. It restores the pedagogical bottleneck that the speed asymmetry had erased, but at a more useful level: the level of the decision, not the line.

Anatomy of an ADR that protects trajectory

I now work with a lightweight format inspired by Michael Nygard. Nothing heavy. A markdown file of 80 to 200 lines. A status, a date, a scope, and a structure that forces answers to the questions we would otherwise avoid.

Scope is the field that has surprised me most. Listing the impacted packages and boundaries forces you to ask where the decision actually applies. In a multi-agent, multi-client system like mine, that can be half the analytical work.
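To make the shape of such a record tangible, here is a minimal sketch of an ADR as a typed structure, with a helper that reports which sections are still empty. The field names, the `AdrStatus` values, and `missingSections` are all assumptions made for illustration. They are not a standard schema, and the ADRs themselves remain plain markdown files.

```typescript
// Hypothetical shape: field names are assumptions, not a standard ADR schema.
type AdrStatus = "proposed" | "accepted" | "superseded";

interface RejectedAlternative {
  option: string;
  reason: string;
}

interface Adr {
  id: string;
  title: string;
  status: AdrStatus;
  date: string; // ISO date, e.g. "2026-02-20"
  scope: string[]; // impacted packages or boundaries
  context: string;
  decision: string;
  alternatives: RejectedAlternative[];
  consequences: string[];
  invariants: string[];
}

// Lists the sections that are still empty, so an incomplete draft is visible.
function missingSections(adr: Adr): string[] {
  const missing: string[] = [];
  if (adr.scope.length === 0) missing.push("scope");
  if (adr.context.trim() === "") missing.push("context");
  if (adr.decision.trim() === "") missing.push("decision");
  if (adr.alternatives.length === 0) missing.push("alternatives");
  if (adr.consequences.length === 0) missing.push("consequences");
  if (adr.invariants.length === 0) missing.push("invariants");
  return missing;
}

// A deliberately incomplete draft: alternatives and invariants are not filled in yet.
const draft: Adr = {
  id: "ADR-000",
  title: "Agent vs Orchestrator",
  status: "proposed",
  date: "2026-02-20",
  scope: ["core"],
  context: "Agent and orchestrator differ in the code, but the difference was never stated.",
  decision: "The agent carries a capability. The orchestrator carries an intention.",
  alternatives: [],
  consequences: ["Every LLM call must live inside an agent."],
  invariants: [],
};

console.log(missingSections(draft));
```

The empty sections are usually the ones that matter: a decision with no rejected alternatives and no named invariants has not really been argued yet.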

To make this concrete, here is an ADR I wrote a few days ago. It is deliberately one of the foundational ones in my project, and it is retroactive. It documents a boundary that should have been made explicit much earlier. If I had to keep only one example of what I mean by “untangling,” it would be this one.

ADR-000 - Agent vs Orchestrator

- Status: accepted

- Date: 2026-02-20

- Scope: core

Context

The project relies on two important notions: agent and orchestrator.

In the codebase, the difference between them already exists, but it was never clearly stated. That creates recurring questions:

- where should a new piece of logic go?

- can an agent drive a workflow?

- what should be exposed to the API?

- who decides the order of steps?

This ADR establishes a shared vocabulary to remove ambiguity.

Decision

Agent

An agent represents a specific capability.

Its role is to execute a targeted task, for example:

- building a prompt,

- calling an LLM,

- calling tools,

- parsing a response,

- validating a structured result.

An agent does not decide the overall workflow. It knows how to do one thing, but it does not decide when or why that thing should happen.

In short:

An agent knows how to do X.

Orchestrator

An orchestrator represents a complete workflow.

Its role is to organize the steps of a run:

- choosing which agent to call,

- deciding the order of steps,

- handling conditions,

- retrying on error,

- requesting human validation,

- producing a final output.

It is also the orchestrator that is exposed outward to the API, the registry, and the UI.

In short:

An orchestrator decides when, in what order, and under what conditions X, Y, or Z happen.

Separation rule

The rule is simple:

The agent carries a capability.

The orchestrator carries an intention.

In other words:

What belongs in an agent:

- LLM logic,

- local transformation or validation,

- execution of a specialized task.

What belongs in an orchestrator:

- step sequencing,

- branching,

- conditions,

- error handling,

- human interaction,

- parallelization.

Alternatives considered

- Use only one concept, “agent”. Rejected, because it mixes execution and orchestration in the same object.

- Use workflows only, without a separate agent concept. Rejected, because it removes reusability and weakens responsibility boundaries.

- Let agents call one another. Rejected, because the workflow becomes implicit, which makes it harder to understand, observe, and maintain.

Consequences

- Every LLM call must live inside an agent.

- Every workflow decision must live inside an orchestrator.

- Agents remain reusable and testable in isolation.

- Orchestrators become the executable unit exposed to the rest of the system.

- The separation of responsibilities becomes easier to read in the code.

Code impact

- packages/core/src/orchestrator/types.ts

- packages/core/src/orchestrator/orchestrator-engine.ts

- packages/core/src/orchestrator/orchestrator.ts

- apps/client-intent/src/domain/**/agents/

- apps/client-intent/src/domain/**/orchestrators/

- apps/client-nbi/src/domain/**/agents/

- apps/client-nbi/src/domain/**/orchestrators/

Documentation to maintain

- Subsequent ADRs rely on this distinction.

- This document serves as a reference whenever there is hesitation about where a piece of logic belongs.

This ADR contains almost nothing that a careful human could not have inferred by reading the code. And yet, without it, every logic-placement decision becomes negotiable again in every PR. With it, the question “does this belong in the agent or in the orchestrator?” has a stable, shareable, defensible answer. Future drifts become detectable because we can now say: this change violates ADR-000.

That is exactly what I mean by protecting trajectory.
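The boundary that ADR-000 describes can be sketched in a few lines of TypeScript. This is an illustration under assumptions, not the contents of the project's `types.ts`: the `Agent` and `Orchestrator` interfaces, the stub agents, and `withRetry` are all hypothetical, and the agents return canned values where a real one would build a prompt and call an LLM.

```typescript
// Hypothetical types: a sketch of the ADR-000 boundary, not the project's real API.

// An agent carries a capability: it knows how to do X, nothing about ordering.
interface Agent<I, O> {
  name: string;
  run(input: I): Promise<O>;
}

// An orchestrator carries an intention: it owns sequencing, conditions, retries.
interface Orchestrator<I, O> {
  name: string;
  execute(input: I): Promise<O>;
}

// Stub agents. A real agent would build a prompt and call an LLM here.
const summarize: Agent<string, string> = {
  name: "summarize",
  run: async (text) => text.slice(0, 20),
};

const validate: Agent<string, boolean> = {
  name: "validate",
  run: async (summary) => summary.length > 0,
};

// Retry policy lives with the workflow, never inside an agent.
async function withRetry<T>(attempts: number, task: () => Promise<T>): Promise<T> {
  let lastError: unknown;
  for (let i = 0; i < attempts; i++) {
    try {
      return await task();
    } catch (error) {
      lastError = error;
    }
  }
  throw lastError;
}

const summarizeAndCheck: Orchestrator<string, { summary: string; valid: boolean }> = {
  name: "summarize-and-check",
  async execute(text) {
    // Order and conditions are decided here, and only here.
    const summary = await withRetry(2, () => summarize.run(text));
    const valid = await validate.run(summary);
    return { summary, valid };
  },
};

summarizeAndCheck
  .execute("A field report on comprehension debt")
  .then((result) => console.log(result));
```

Neither agent references the other or the orchestrator, so each can be tested in isolation, and the whole workflow is readable in a single `execute` body.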

Untangling in practice

Today I work in two parallel movements.

1. Retroactive ADRs

For decisions that had been made implicitly, through an accumulation of small, locally reasonable commits. ADR-000 is one of them. This work is somewhat thankless: you document the past, and sometimes have to admit that a decision you wanted to be clear was in fact made by default. But it immediately reveals the places where the documented decision and the actual code no longer match. Each gap becomes a targeted refactoring task instead of a diffuse discomfort.

2. The rule of “ADR first, not after”

For every new structuring change. If I feel the slightest hesitation, I write the ADR before opening my editor. That takes thirty minutes. It saves weeks of silent drift. And incidentally, it gives me a much cleaner prompt for AI when I later ask it to implement the change: an ADR is a compact spec that has already exhausted the hard part of the thinking.

Rhythm matters more than volume. I do not document everything. I document what structures the system. My ADR folder now has around fifteen files in a project that would have hundreds if I tried to “cover” every decision. It is not a library. It is a skeleton.
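Because each ADR lists the code it affects, a small script could point a reviewer at the ADRs that a change falls under. The sketch below is one possible approach, not something the project is known to use: `globToRegExp` is a deliberately simplified matcher written for this example (it handles only `*`, `**/`, and a trailing `/` meaning "everything under this directory"), and the changed file paths are invented.

```typescript
// Hypothetical helper: a simplified glob matcher, not a full glob implementation.
function globToRegExp(glob: string): RegExp {
  const PLACEHOLDER = "\u0000";
  const body = glob
    .replace(/[.+?^${}()|[\]\\]/g, "\\$&") // escape regex metacharacters except "*"
    .replace(/\*\*\//g, PLACEHOLDER) // protect "**/" before handling single "*"
    .replace(/\*/g, "[^/]*") // "*" stays within one path segment
    .split(PLACEHOLDER)
    .join("(?:.*/)?"); // "**/" matches zero or more directories
  // A trailing "/" means: any file under that directory.
  const suffix = glob.endsWith("/") ? ".*" : "";
  return new RegExp(`^${body}${suffix}$`);
}

interface AdrScope {
  id: string;
  codeImpact: string[]; // paths or globs copied from the ADR's "Code impact" section
}

// Returns the ids of the ADRs whose code impact covers at least one changed file.
function adrsTouchedBy(changedFiles: string[], adrs: AdrScope[]): string[] {
  return adrs
    .filter((adr) =>
      adr.codeImpact.some((glob) => {
        const pattern = globToRegExp(glob);
        return changedFiles.some((file) => pattern.test(file));
      }),
    )
    .map((adr) => adr.id);
}

const adrIndex: AdrScope[] = [
  {
    id: "ADR-000",
    codeImpact: [
      "packages/core/src/orchestrator/types.ts",
      "apps/client-intent/src/domain/**/agents/",
      "apps/client-intent/src/domain/**/orchestrators/",
    ],
  },
];

// Invented paths, for illustration only.
const changed = [
  "apps/client-intent/src/domain/billing/agents/summarize.ts",
  "README.md",
];

console.log(adrsTouchedBy(changed, adrIndex));
```

A match does not mean the change violates the ADR. It only tells the reviewer which decision to reread before approving.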

AI is pushing us toward heavier problems

There is one last thing I did not anticipate, and it makes all this ADR work even more critical than it first appears.

AI did not save me time on the things I used to do before. It pushed me to take on problems I would not have tackled three years ago. A multi-agent, multi-client framework with orchestration, registry, HIL, checkpoints, and shared front/API contracts is not a project I would have launched alone in 2022. The complexity would have been out of reach for one person, or even a small team.

This is not specific to me. It is a general movement. The projects coming out over the last eighteen months are structurally more ambitious. The technical bar to entry has shifted. What we used to consider “a big system” has become baseline, and we are now attacking architectures that used to belong to enterprise R&D.

And that is where the trap closes. AI gives us the ability to produce code for these more complex systems. It does not automatically give us the ability to hold their design together. The problems have become more fundamental: boundaries between subsystems, inter-service contracts, cross-cutting invariants, state propagation. Exactly the class of problems that is not solved line by line, and that tests alone cannot protect.

The more ambitious the systems we allow ourselves to build, the more expensive comprehension debt becomes to ignore. It is not a coincidence that the wake-up call is happening now: there was no sudden collapse in our practices, only a silent rise in the level of complexity we now allow ourselves to confront.

ADRs do not document the code. They protect trajectory.

If I had to summarize what this year has taught me in one sentence, it would be this:

In a world where producing code becomes too easy, sovereignty moves toward explicit decision-making.

This is a shift, not a loss. AI is not taking technical control away from us. It is making obsolete the places where we thought we were exercising it.

It was not in review, because we cannot review faster than it can produce. It was not in tests, because we cannot test what we did not think to specify. It was not in exhaustive specs either, because a spec detailed enough to describe a program is the program, just written in a non-executable language. All of these artifacts remain necessary. None of them is enough anymore to hold the trajectory of a system.

What remains, and what becomes the scarce resource, is the ability to decide explicitly what structures the system, to name the invariants we want to protect, and to record the rejected alternatives. In short, to make decisions that are not absorbed by the flow of generation.

ADRs are the simplest and most robust artifact I have found to materialize that shift. They cost little. They survive refactors. They resist turnover. They impose friction at exactly the right place: where a structuring decision is being made without us fully noticing it. In a world where code becomes almost free to produce, that is where technical sovereignty relocates.

ADRs do not document the code. They protect trajectory.

AiBrain
