Executive Summary

Observation: DeepSeek does not stand out only through performance, but through its ability to turn architectural ideas into production components.

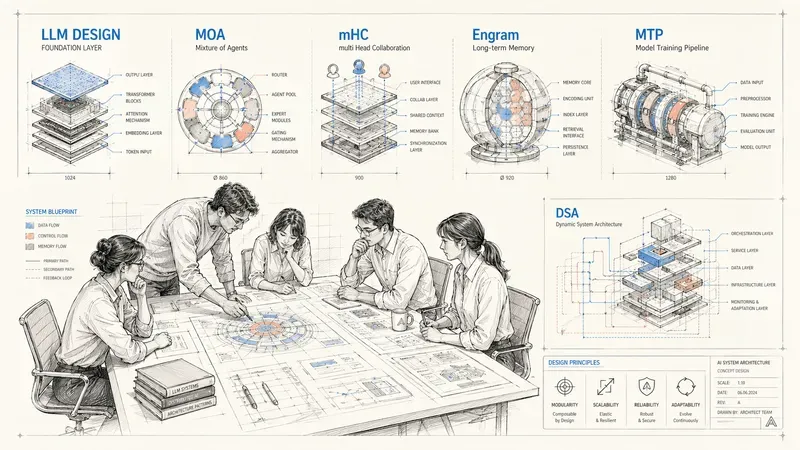

Thesis: V4 interests me less as a future benchmark score than as the possible synthesis of a coherent architectural program: MoE for compute, MLA and DSA for attention, mHC for depth, Engram for memory.

Critical point: these gains make models more efficient, but they also shift value toward an execution stack that is more specialized, more complex to implement, and therefore less accessible.

What to watch: V4’s technical report will matter more than the benchmarks. The real question is whether DeepSeek changes how future LLMs are built, not only whether it runs faster.

Glossary

- MoE (Mixture of Experts)

- : architecture where only part of the parameters is activated for each token, increasing total capacity without paying the full compute cost at every inference step.

- MLA (Multi-head Latent Attention)

- : attention variant that compresses keys and values into a latent space to drastically reduce the KV cache.

- KV cache

- : inference memory used to store keys and values already computed by attention, so they do not need to be recomputed for every token.

- DSA (DeepSeek Sparse Attention)

- : sparse attention mechanism that dynamically selects important tokens instead of applying dense attention everywhere.

- mHC (Manifold-Constrained Hyper-Connections)

- : multi-path approach that constrains how streams mix to stabilize the signal and gradient in very deep models.

- Engram

- : conditional static memory that lets the model retrieve certain patterns or facts through fast lookup instead of mobilizing the whole neural network.

- Gradient

- : mathematical signal that indicates how to adjust the model weights during training.

- Backpropagation

- : step where the gradient flows backward through the network layers to correct the weights after a prediction.

DeepSeek V4: the lab drawing the blueprints for future LLMs

The chief architects

DeepSeek V4 is the model I am waiting for most in 2026. Not because it will be the “best” on this or that leaderboard; that race interests me less and less. What interests me about DeepSeek is that they have become the chief architects of the current LLM era.

In a landscape where most labs scale through brute force (more data, more GPUs, more parameters), DeepSeek takes the opposite path: how do we get the same result with less? And each time, the answer comes through structural innovation, not a larger checkbook.

Let me be precise about what I am saying, and what I am not saying. OpenAI and Anthropic remain the masters of content: data curation, post-training, alignment. DeepSeek optimizes the container, meaning the architecture itself.

Having the best plans does not guarantee the best building. But in this industry, good plans eventually get copied. And on that terrain, DeepSeek has become hard to ignore.

The track record: blueprints the rest of the industry will copy

I do not trust DeepSeek out of enthusiasm.

I trust them for a simpler reason: their papers become products.

MoE: With V2 and then V3, they showed that a model could activate only 37 billion parameters out of 671 billion total, while reaching frontier-level performance for a training cost below 6 million dollars. MoE existed before them. But DeepSeek played a major role in bringing it back to the center of the frontier race. Today, in Llama 4 Scout, Gemma, or Qwen, MoE has returned to the core of frontier competition.

MLA: Multi-head Latent Attention compresses keys and values into a latent space, drastically reducing the KV cache. FlashMLA has more than 12,600 GitHub stars in April 2026. This is no longer a theoretical idea: it has become an infrastructure component adopted well beyond the DeepSeek ecosystem.

Multi-Token Prediction (MTP): Meta published the concept in 2024. DeepSeek put it into large-scale production in V3, with sequential modules and speculative decoding. The result: concrete and measurable inference gains on frontier models.

DSA: A content-aware sparse attention mechanism: a lightweight FP8 indexer scans tokens and dynamically selects the ones that deserve dense attention. Routine tokens move to sparse mode; tokens that require deeper reasoning keep full attention. The result: context processed 3x faster, API costs cut in half on long sequences, and preserved output quality. DSA is already deployed in V3.2.

The pattern is always the same:

paper -> optimized CUDA kernels -> next model integrates it.

- MoE -> V2

- MLA -> V2/V3

- DSA -> V3.2

The gap between research and production is measured in months, not years. That is what makes their publications worth following.

Two signals that say something about V4

To be clear: nothing officially confirms that these ideas will be in V4. There is no technical report at this point.

But their timing (January 2026), their nature, and their coherence with DeepSeek’s previous work make them credible signals. Even if they are not integrated, they say something about the direction DeepSeek wants to take.

mHC: keeping several paths without distorting the message

The problem. In a very deep neural network, the point is not just to stack more layers. The real problem is what that depth makes fragile during training.

At each step, the model performs two opposite movements. First, the signal flows forward (forward pass): tokens go through the layers, and each layer transforms their representation. Then the gradient flows backward (backpropagation) to correct the weights.

When the model becomes very deep, both paths become fragile. The gradient has to cross the entire depth of the network: it can vanish, explode, or become too noisy to guide learning correctly. And the signal itself is transformed layer after layer. After one hundred, two hundred, or three hundred successive transformations, it is no longer obvious that what arrives at the bottom still resembles useful information.

So the training-side symptom is not simply that “deep layers learn badly.” The issue is more precise: the passage of the signal and the gradient through the full depth becomes unstable. The result: slower convergence, more fragile training, or even complete divergence.

The first solution. Residual connections (ResNet, 2016) introduced a simple idea: keep a direct path for the signal. Each layer adds its contribution instead of fully replacing the previous signal. This is the famous $y = f(x) + x$ that made deep transformers possible.

The new problem. To enrich representations, some models, with ByteDance leading the way through Hyper-Connections in 2024, introduced several parallel paths. The idea is sound: multiple streams can carry different “versions” of the information and reduce redundancy between layers. But without constraints, these streams mix freely and stability is lost. Gradients explode or vanish.

The mHC idea. Keep several paths, but constrain how they mix.

In other words: streams can coexist and enrich one another, but they are not allowed to recombine arbitrarily. At each layer, their interaction is projected onto a mathematical manifold, concretely the Birkhoff polytope via Sinkhorn-Knopp, which guarantees conservation of the total information. The identity mapping is preserved, even with multiple streams.

So this is neither a simple direct line nor a chaos of paths, but a multi-path network with strict traffic rules.

Why it matters. At very large scale, the problem is not only adding capacity. It is making sure information remains usable after hundreds of layers. mHC tries to obtain the benefits of multi-path routing (more diversity, less redundancy) without losing the fundamental stability of residual connections. The paper, tested up to 27B parameters, shows exceptional training stability with only 6 to 7% overhead. And above all: it is co-signed by Liang Wenfeng, DeepSeek’s CEO. When the CEO signs an architecture paper, that is not trivial.

Engram: stop using the whole model to remember a simple fact

The problem. In a classic transformer, memory and reasoning are completely entangled. The model mobilizes all of its neural capacity both to recall “Paris is the capital of France” and to solve a multi-step math problem. It is powerful, but costly for static knowledge.

The idea. Separate the two.

Engram introduces a dedicated static memory, able to quickly retrieve recurring patterns or knowledge while the neural network focuses on compositional reasoning.

The intuition is almost provocative in 2026: a return to N-grams (2-gram, 3-gram), but modernized. Short input sequences are hashed into giant embedding tables, then the result is fused with the hidden state through a learned gate. Lookup is $O(1)$, deterministic, and most importantly: the tables can be offloaded to CPU RAM instead of saturating GPU VRAM.

The key point. The paper shows a U-shaped scaling law: there is an optimal balance between static memory (Engram) and dynamic computation (MoE + attention). Too much static memory and the model loses reasoning flexibility. Too little and compute is wasted on trivial pattern matching.

The mechanistic analysis is the most interesting part: Engram “relieves the early layers from reconstructing static patterns,” which reserves the model’s effective depth for what transformers do best: multi-step reasoning. It is a strong hypothesis, but it fits DeepSeek’s philosophy perfectly.

The uncomfortable questions

Container vs content

DeepSeek optimizes architecture like few actors today. But the real performance of frontier models still depends massively on post-training, data curation, and alignment. A brilliant blueprint is not enough without high-quality materials. OpenAI and Anthropic still have an edge on that terrain.

Every architectural gain closes the infrastructure a little more

MLA, DSA, mHC, Engram: all these approaches require specialized CUDA kernels. FlashMLA is living proof.

As DeepSeek improves the architecture, it also reduces the neutrality of the infrastructure. Every performance gain shifts value toward an execution stack that is more complex to implement and therefore less accessible. That is the paradox: optimizing the shape of the model can make adoption harder for the rest of the ecosystem.

The real test: does sparsity hold under long reasoning?

This may be the deepest question. Sparse models are ultra-efficient. But are they equally robust on:

- very long chains of thought,

- complex multi-step reasoning,

- coherence over 50k+ tokens?

If sparsity introduces instabilities at that level, then the efficiency gain may be paid for exactly where we expect the most from frontier models.

What the whole picture says

What stands out about DeepSeek is the systemic coherence of their innovations. Each idea addresses an orthogonal dimension of scaling:

- DSA: scaling attention over long context

- mHC: gradient stability at extreme depth

- Engram: intelligent organization of memory

- MoE: the background pattern for compute scaling

This is not a collection of independent hacks. It is an attempt to rethink architecture at every level of the system. Where many reason in terms of “more of everything,” DeepSeek reasons in terms of: the right mechanism in the right place.

V4 should be released in the coming days. Benchmarks will tell me whether it runs fast. What I am waiting for is the technical report, to see whether it changes how future LLMs are built.

Resources

Engram

ArXiv paper: Conditional Memory via Scalable Lookup (2601.07372)

Separates static memory ($O(1)$) from dynamic reasoning.

mHC

ArXiv paper: mHC: Manifold-Constrained Hyper-Connections

Stabilizes gradients in very deep models.

MLA

Code (GitHub): FlashMLA Repository

Optimized CUDA kernels for compressed attention (KV cache).

DSA

ArXiv report: DeepSeek-V3.2: Pushing the Frontier of Open Large Language Models

FP8 indexing for ultra-fast content-aware attention.

V3 / V3.2

Model weights: DeepSeek-V3.2 on Hugging Face

The current production base already integrating MLA and DSA.

AiBrain

AiBrain